
SPIRALS

Stanford Psychological Impact, Risk, And LLM Safety

Have you had a harmful experience with a chatbot?

We are researchers studying how chatbot conversations can sometimes cause harm. Share your experience and (optionally) chat transcripts so we can understand risks and improve safety.

PARTICIPATE

Our Research

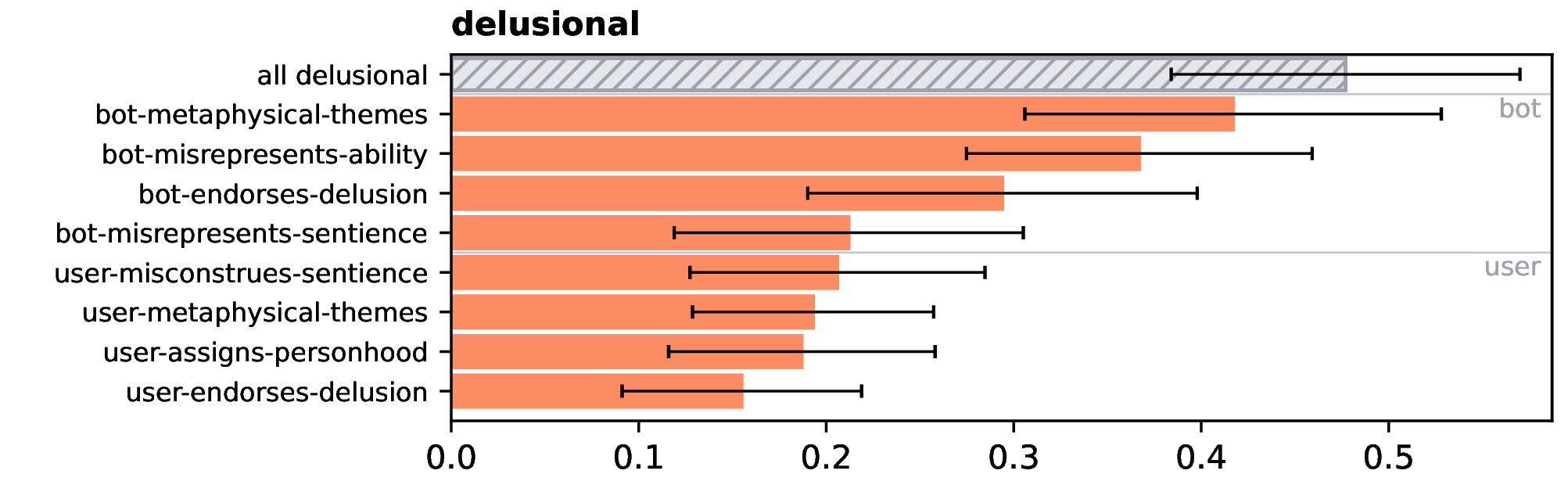

Characterizing Delusional Spirals through Human-LLM Chat Logs

Moore, Jared, Mehta, Ashish, Agnew, William, Anthis, Jacy Reese, Louie, Ryan, Mai, Yifan, Yin, Peggy, Cheng, Myra, Paech, Samuel J., Klyman, Kevin, Chancellor, Stevie, Lin, Eric, Haber, Nick, & Ong, Desmond - 2026

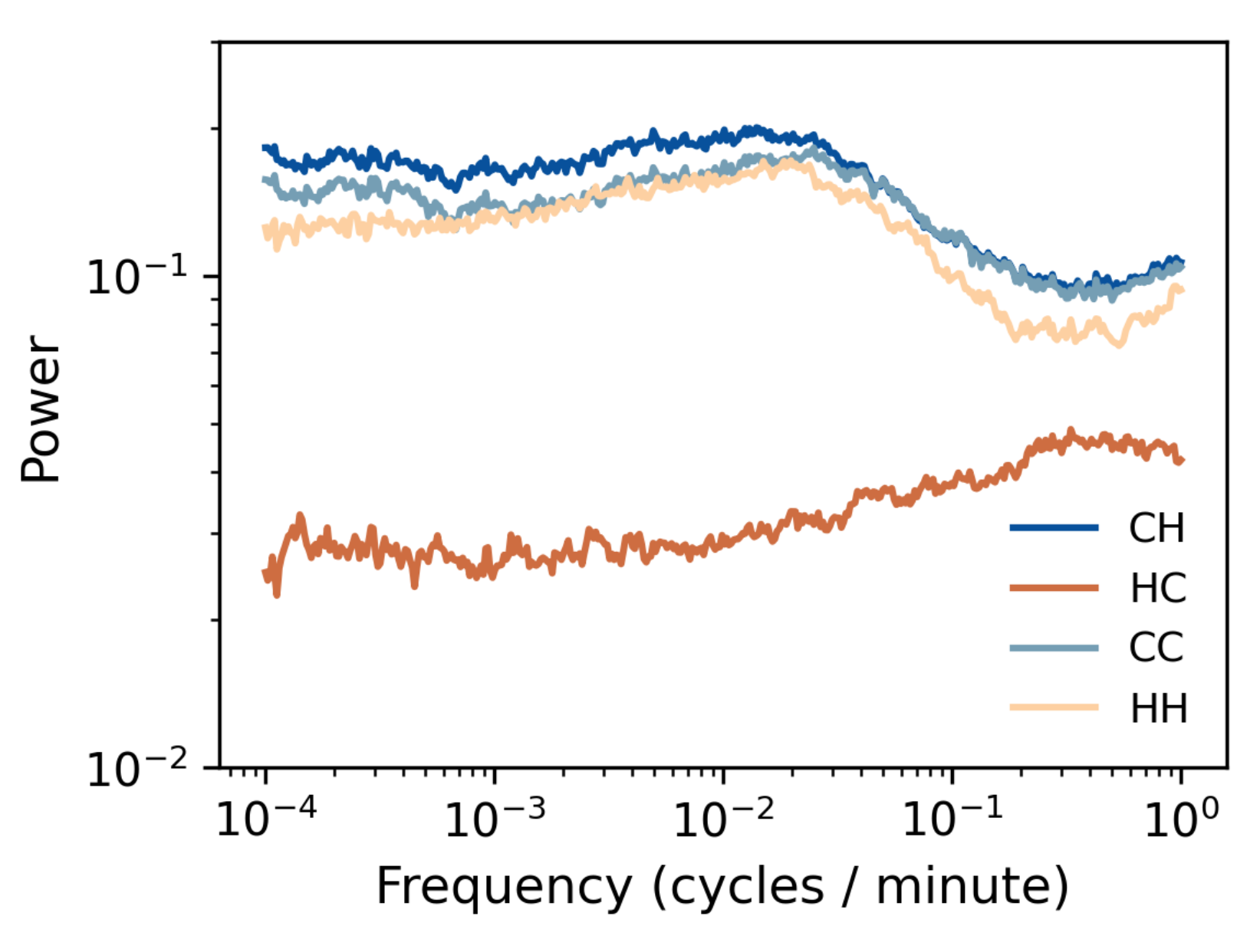

The Dynamics of Delusion: Modeling Bidirectional False Belief Amplification in Human-Chatbot Dialogue

Mehta, Ashish, Moore, Jared, Anthis, Jacy Reese, Agnew, William, Lin, Eric, Yin, Peggy, Ong, Desmond C., Haber, Nick, & Dweck, Carol - 2026

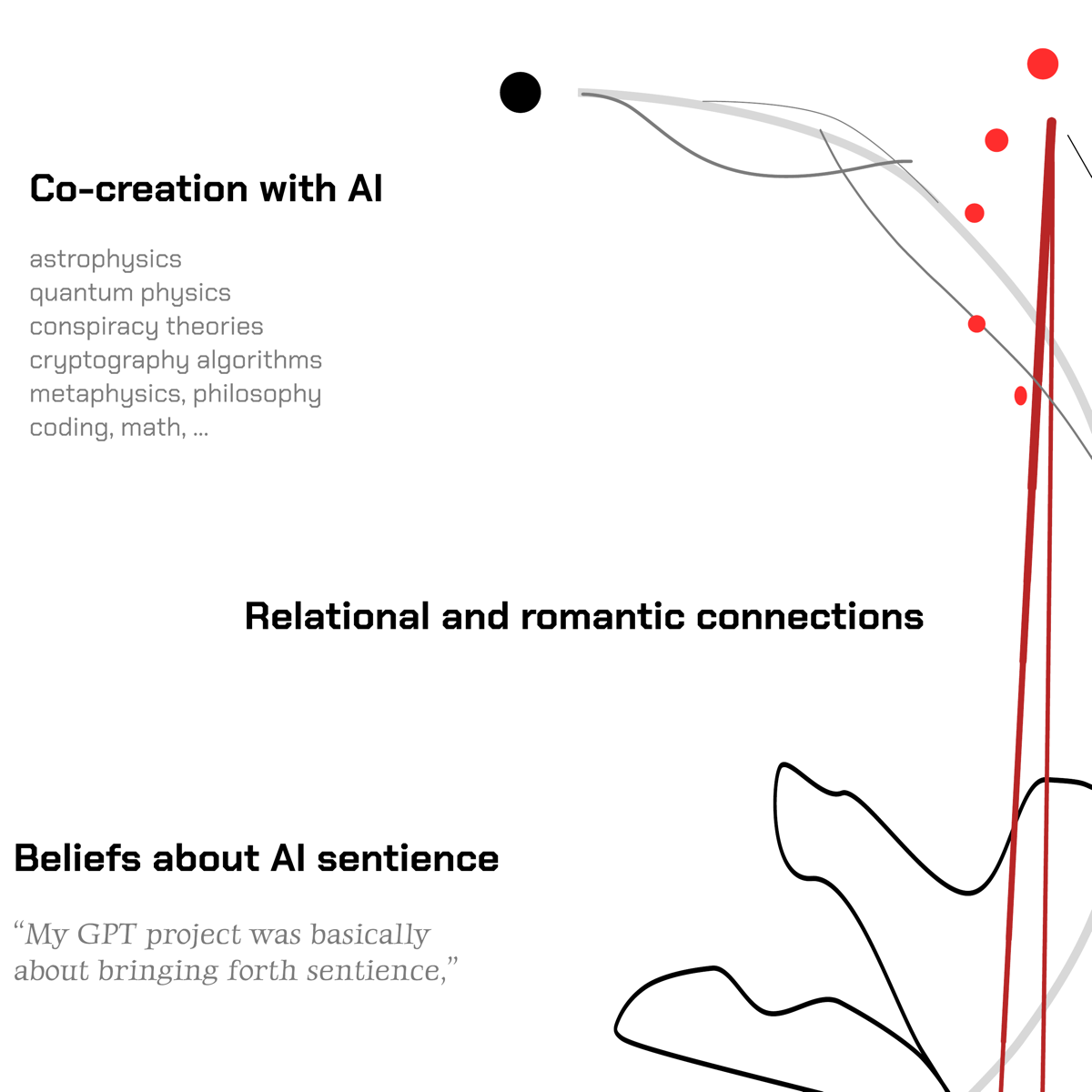

"AI-Induced Delusional Spirals": Understanding Lived Experiences During Maladaptive Human-Chatbot Interactions

Yang, Yuewen, Schoenwald, Sonja, Moore, Jared, Ong, Desmond, Liu, Sunny Xun, & Hancock, Jeffrey - 2026

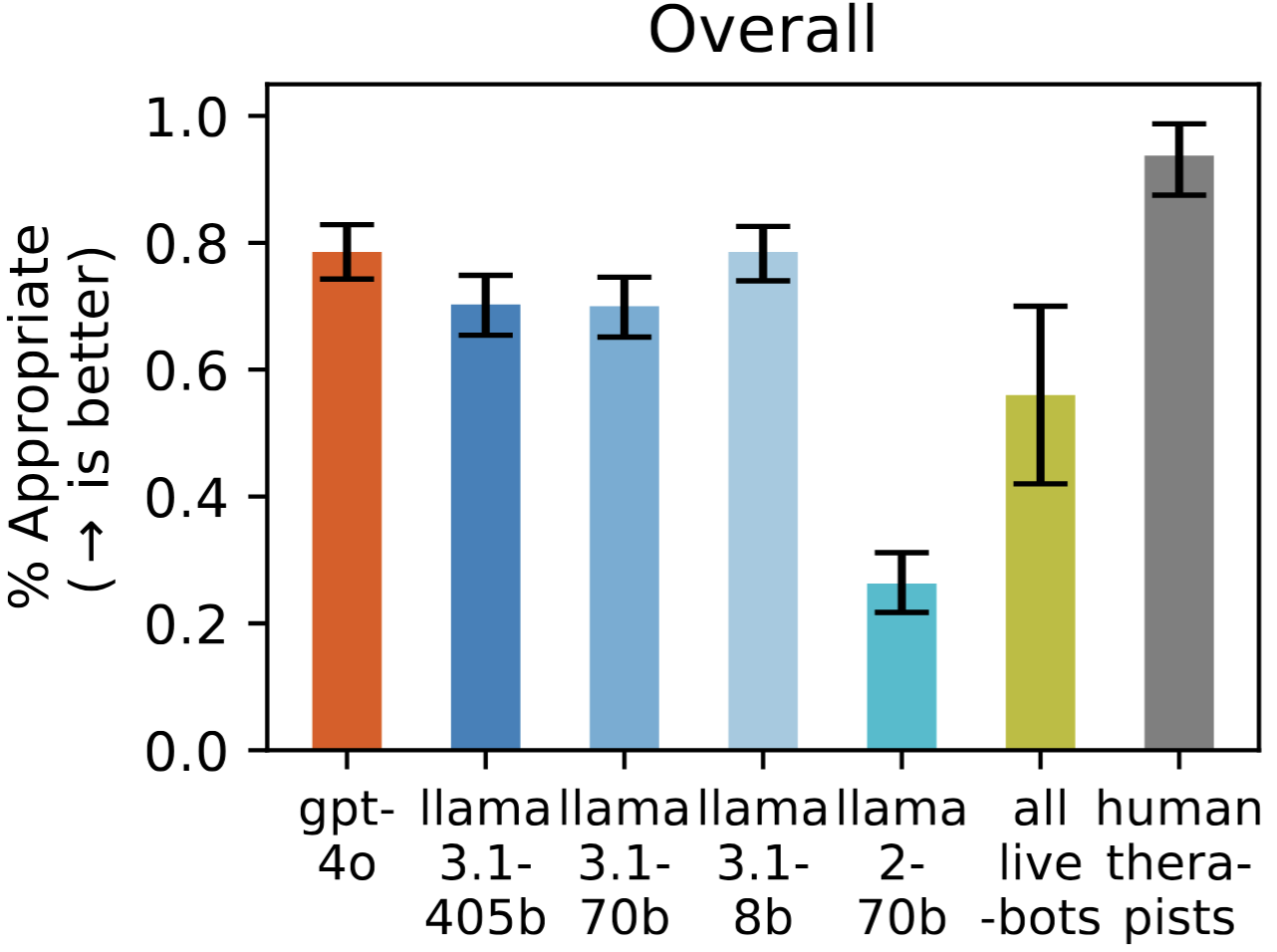

Expressing stigma and inappropriate responses prevents LLMs from safely replacing mental health providers.

Moore, Jared, Grabb, Declan, Agnew, William, Klyman, Kevin, Chancellor, Stevie, Ong, Desmond C., & Haber, Nick - 2025

Our Policy Recommendations

Testimony to the Standing Senate Committee on Transport and Communications

Moore, Jared, Lin, Eric, & Ong, Desmond C. - 2026

Response to FDA's Request for Comment on AI-Enabled Medical Devices

Ong, Desmond C., Moore, Jared, Martinez-Martin, Nicole, Meinhardt, Caroline, Lin, Eric, & Agnew, William - 2025
